I rather like inventions and engineering history, and I regularly go to the SME, a fair of 18th- to 19th-century innovation. I am generally impressed with how these machines work, but what really brings things out is when talented people use the innovation to do something radical. Case in point: the Gatling gun. Invented by Richard J. Gatling in 1861 for use in the Civil War, it was never used there, or in any major war, until 1898, when Lieut. John H. Parker (“Gatling Gun” Parker) showed how to deploy the guns successfully and helped take over Cuba. Until then, they were considered another species of short-range, grape-shot cannon, and ignored.

Parker had sent his thoughts on how to deploy a Gatling gun in a letter to West Point, but they were ignored, as most new thoughts are. For the Spanish-American War, Parker got four of the guns, trained his small detachment to use them, and registered as a quartermaster corps in order to sneak them aboard ship to Cuba. Here follows Theodore Roosevelt’s account of their use.

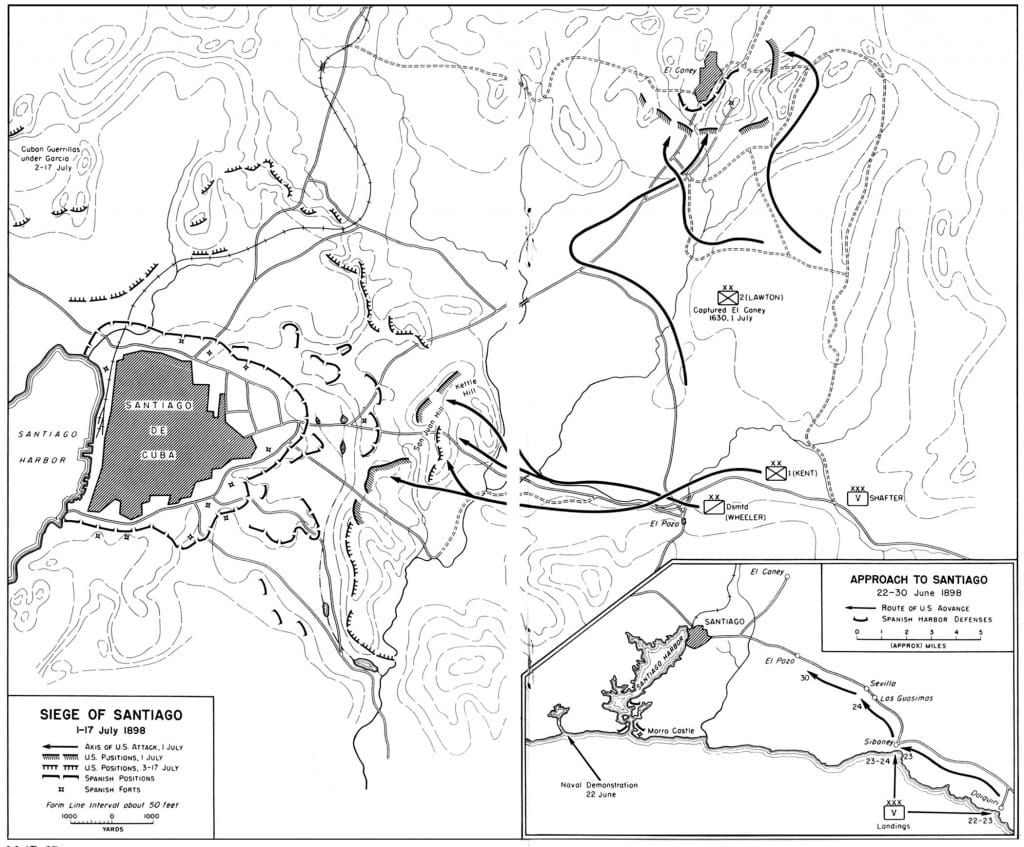

“On the morning of July 1st, the dismounted cavalry, including my regiment, stormed Kettle Hill, driving the Spaniards from their trenches. After taking the crest, I made the men under me turn and begin volley-firing at the San Juan Blockhouse and entrenchments against which Hawkins’ and Kent’s Infantry were advancing. While thus firing, there suddenly smote on our ears a peculiar drumming sound. One or two of the men cried out, ‘The Spanish machine guns!’ but, after listening a moment, I leaped to my feet and called, ‘It’s the Gatlings, men! It’s our Gatlings!’ Immediately the troopers began to cheer lustily, for the sound was most inspiring. Whenever the drumming stopped, it was only to open again a little nearer the front. Our artillery, using black powder, had not been able to stand within range of the Spanish rifles, but it was perfectly evident that the Gatlings were troubled by no such consideration, for they were advancing all the while.

Soon the infantry took San Juan Hill, and, after one false start, we in turn rushed the next line of block-houses and intrenchments, and then swung to the left and took the chain of hills immediately fronting Santiago. Here I found myself on the extreme front, in command of the fragments of all six regiments of the cavalry division. I received orders to halt where I was, but to hold the hill at all hazards. The Spaniards were heavily reinforced and they opened a tremendous fire upon us from their batteries and trenches. We laid down just behind the gentle crest of the hill, firing as we got the chance, but, for the most part, taking the fire without responding. As the afternoon wore on, however, the Spaniards became bolder, and made an attack upon the position. They did not push it home, but they did advance, their firing being redoubled. We at once ran forward to the crest and opened on them, and, as we did so, the unmistakable drumming of the Gatlings opened abreast of us, to our right, and the men cheered again. As soon as the attack was definitely repulsed, I strolled over to find out about the Gatlings, and there I found Lieut. Parker with two of his guns right on our left, abreast of our men, who at that time were closer to the Spaniards than any others.

From thence on, Parker’s Gatlings were our inseparable companion throughout the siege. They were right up at the front. When we dug our trenches, he took off the wheels of his guns and put them in the trenches. His men and ours slept in the same bomb-proofs and shared with one another whenever either side got a supply of beans or coffee and sugar. At no hour of the day or night was Parker anywhere but where we wished him to be, in the event of an attack. If a troop of my regiment was sent off to guard some road or some break in the lines, we were almost certain to get Parker to send a Gatling along, and, whether the change was made by day or by night, the Gatling went. Sometimes we took the initiative and started to quell the fire of the Spanish trenches; sometimes they opened upon us; but, at whatever hour of the twenty-four the fighting began, the drumming of the Gatlings was soon heard through the cracking of our own carbines.”

Map of the attack on Kettle Hill and San Juan Hill in the Spanish-American War, July 1, 1898. The Spanish had 760 troops in fortified positions defending the crests of the two hills, and 10,000 more defending Santiago. As Americans were being killed by crossfire in “Hell’s Pocket,” near the foot of San Juan Hill, Roosevelt, on the right, charged his men, the “Rough Riders” [1st Volunteer Cavalry] and the “Buffalo Soldiers” [10th Cavalry], up Kettle Hill in hopes of ending the crossfire and of helping to protect the troops that would charge farther up San Juan Hill. Parker’s Gatlings were about 600 yards from the Spanish and fired some 700 rounds per minute into the Spanish lines. They were then repositioned on the hill to beat back the counterattack. Without Parker’s Gatling guns, the chances of success would have been small.

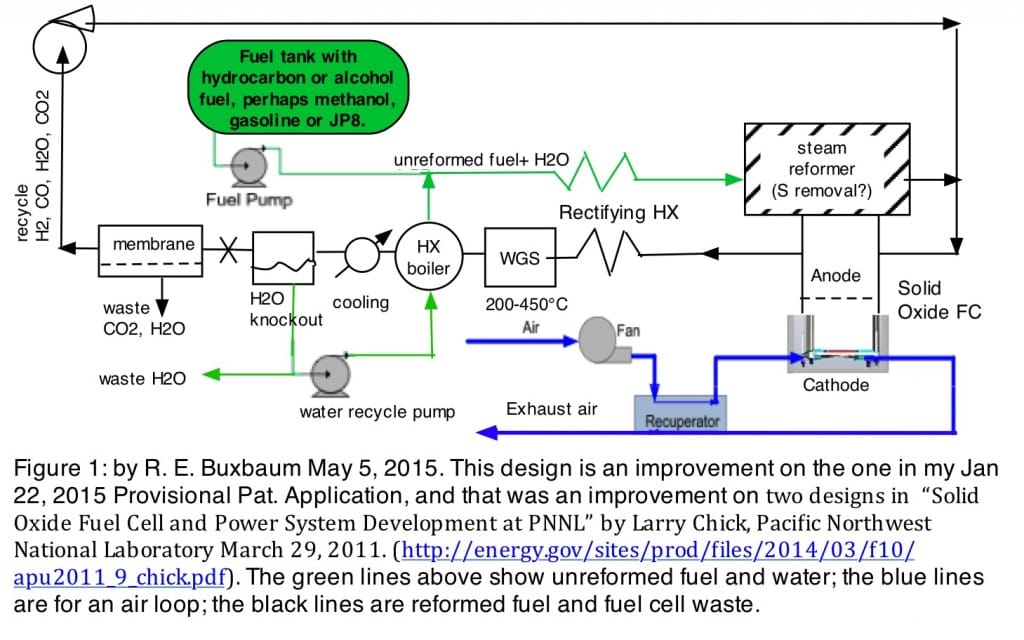

Here is how the Gatling gun works: it’s rather like 5 or more rotating zip guns; a pawl pulls and releases the firing pins. Gravity feeds the bullets at the top and drops the shells out the bottom. Lt. Parker’s deployment innovation was to have the guns hand-carried to protected positions, near enough to the front that they could be aimed. The swivel and rapid fire of the guns allowed the shooter to aim them to correct for the drop in the bullets over fairly great distances. This provided rapid-fire, accurate protection from positions that could not be readily hit. Shortly after the victory on San Juan Hill, July 1, 1898, the Spanish Caribbean fleet was destroyed July 3, Santiago surrendered July 17, and all of Cuba surrendered 4 days later, July 21 (my birthday), a remarkably short war. While TR may not have figured out how to use the Gatling guns effectively, he at least recognized that Lt. John Parker had.

Roosevelt gave two of these more modern Colt-Browning machine guns to Parker’s detachment the day after the battle. They were not particularly effective. By WWI, “Gatling Gun” Parker would be a general; by 1901 Roosevelt would be president.

The day after the battle, Col. Roosevelt gave Parker’s group two Colt-Browning machine guns that he and his family had bought, but had not used. According to Roosevelt, these guns proved to be “more delicate than the Gatlings, and very readily got out-of-order.” The Brownings are the predecessors of the modern machine guns used in the Boxer Rebellion and for wholesale deaths in WWI and WWII.

Dr. Robert E. Buxbaum, June 9, 2015. The Spanish-American War was a war of misunderstanding and colonialism, but its effects, by and large, were good. The cause, the sinking of the USS Maine, February 15, 1898, was likely a mistake. Spain, a decaying colonial power, was a conservative monarchy under Alfonso XIII; the loss of Cuba seems to have led to liberalization. The US, a republic, became a colonial power. There is an inherent friction, I think, between conservatism and liberal republicanism. Generally, republics have out-gunned and out-produced other countries, perhaps because they reward individual initiative.